2.2.1 Basic metrics based on papers and citations

Number of papers

Publish or Perish first shows the total number of papers found in Google Scholar. Please note that this is nearly always an overestimate of the number of papers actually published by the author, journal or university in question, because many of the listed papers are in fact duplicates caused by inaccurate referencing.

Hence, the number of papers statistic and any statistics relating to it need to be interpreted with caution, unless you have carefully merged all stray citations into a master record and have unchecked all irrelevant publications.

Number of citations

Publish or Perish shows the total number of citations to papers listed for the author, journal, topic or university you search for.

The total number of citations is usually fairly accurate as it is not influenced by duplicates of the same paper. Duplicates increase the number of papers, but not the number of citations.

Years (Active)

“Years” shows the number of years since the author's or journal's first article was published. This is calculated as follows: current year - (first year of publication - 1). Hence if someone published his or her first article in 1995 and the current year is 2010, Years is 16. This reflects the number of years in which articles could have been published.
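The calculation above can be written out as a minimal Python sketch. The function name `years_active` is an illustrative choice and not part of Publish or Perish.

```python
def years_active(first_year: int, current_year: int) -> int:
    """Years = current year - (first year of publication - 1)."""
    return current_year - (first_year - 1)


# The example from the text: first article in 1995, current year 2010.
print(years_active(1995, 2010))  # 16
```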

Note that if the search was conducted early in 2010 or if the first article was published late in 1995, this might lead to a slight overestimation of the number of years the author has been active. Hence Publish or Perish provides a conservative estimate. This does not make much of a difference for established researchers. However, for junior researchers it might mean their citations per year values are not absolutely accurate.

It is advisable to check the “Years” value to see whether it reflects common sense. Obviously, if the value is > 100 for an author, something went wrong with Google Scholar's parsing of the data. However, even finding values of > 40 for authors should lead you to be suspicious, unless the academic is close to retirement. A very easy way to check for offending publications is to sort the data by year. For a detailed example, see Section 3.3.5.

Average number of citations per paper

The average number of citations per paper is calculated by dividing the total number of citations by the total number of papers.

This can be a very useful metric to assess the average impact for a journal or author. However, it is only correct if you have carefully merged all stray citations into a master record and have unchecked all irrelevant publications.
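As a rough illustration of why unmerged stray citations matter, the sketch below computes citations per paper for a made-up result set before and after merging a stray record into its master record. The citation counts and the function name `cites_per_paper` are hypothetical.

```python
def cites_per_paper(citations: list[int]) -> float:
    """Total number of citations divided by total number of papers."""
    if not citations:
        return 0.0
    return sum(citations) / len(citations)


# Hypothetical result set: the third entry is a stray duplicate of the first paper.
unmerged = [40, 10, 2]
# After merging, the stray citations are added to the master record.
merged = [42, 10]

print(round(cites_per_paper(unmerged), 2))  # 17.33
print(cites_per_paper(merged))              # 26.0
```

The total number of citations (52) is the same in both cases; only the number of papers, and therefore the average, changes.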

Average number of citations per year

The average number of citations per year is calculated by dividing the total number of citations by the number of years the author or journal has been publishing papers. This can be a very useful metric to assess the yearly impact for a journal or author. It compensates for the time an academic/journal has been active, which provides a fairer comparison for junior academics. As such, it can be used as an alternative to the contemporary h-index.
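A minimal sketch of this calculation follows, reusing the “Years” definition given earlier in this section. The figure of 320 citations is invented for illustration and the function name is hypothetical.

```python
def cites_per_year(total_citations: int, first_year: int, current_year: int) -> float:
    """Total citations divided by Years, where
    Years = current year - (first year of publication - 1)."""
    years = current_year - (first_year - 1)
    return total_citations / years


# Hypothetical author: 320 citations, first article in 1995, searched in 2010.
print(cites_per_year(320, 1995, 2010))  # 20.0
```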

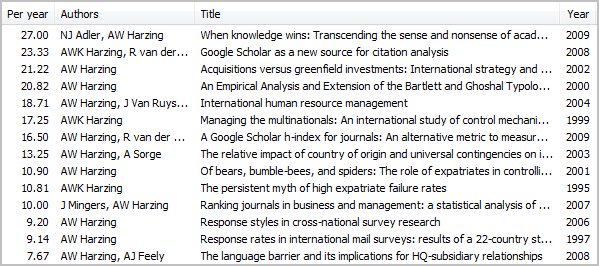

The unnamed lower list of the results panel also includes the number of citations per year for each individual paper of the author or journal in question. This allows one to assess the impact of each paper when corrected for its age. Sorting on the citations per year column allows one to assess an author's or journal's most influential publications (see screenshot).
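The age-corrected ranking described above might be sketched as follows. The text does not say how Publish or Perish computes the age of an individual paper, so this sketch assumes the same convention as for “Years” (current year minus publication year, plus one). The paper titles, years and citation counts are invented.

```python
def paper_age(pub_year: int, current_year: int) -> int:
    # Assumption: same convention as "Years", applied to a single paper.
    return current_year - (pub_year - 1)


def rank_by_cites_per_year(papers, current_year):
    """Return (title, citations per year) pairs, highest value first."""
    ranked = [
        (title, cites / paper_age(pub_year, current_year))
        for title, pub_year, cites in papers
    ]
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# Hypothetical papers: (title, publication year, citations).
papers = [("Paper A", 1998, 130), ("Paper B", 2006, 60), ("Paper C", 2009, 30)]
ranking = rank_by_cites_per_year(papers, 2010)
for title, value in ranking:
    print(title, value)
```

In this made-up example the oldest paper has the most citations in total, but the most recent paper ranks first once age is taken into account.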

Average number of citations per author

The average number of citations per author is calculated by first dividing the number of citations for each publication by the number of authors for that publication. Subsequently the resulting citations are added up.

The resulting number of citations can be seen as the single-authored equivalent for the author or journal in question. For authors, the average number of citations per author therefore gives a fairly good picture of an academic's individual impact and can be used as an alternative to the individual h-index. Please note though that the h-index incorporates both impact and productivity.
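The two steps described above (divide each publication's citations by its number of authors, then add up the results) can be sketched as follows. The data and the function name `cites_per_author` are hypothetical.

```python
def cites_per_author(papers) -> float:
    """Sum over all papers of (citations / number of authors)."""
    return sum(cites / n_authors for cites, n_authors in papers)


# Hypothetical papers: (citations, number of authors).
papers = [(30, 1), (40, 2), (90, 3)]
print(cites_per_author(papers))  # 30/1 + 40/2 + 90/3 = 80.0
```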

Average number of papers per author

The average number of papers per author is calculated by first dividing each publication by the number of authors, resulting in a number between 0 and 1 (sole authorship). Subsequently, these fractional author counts are added up.

The resulting number of papers can be seen as the single-authored equivalent for the author or journal in question. For authors, the average number of papers per author therefore gives a fairly good picture of an academic's individual productivity and can be used as an alternative to the individual h-index. Please note though that the h-index incorporates both impact and productivity.
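A minimal sketch of the fractional author count described above, using invented author counts and a hypothetical function name:

```python
def papers_per_author(author_counts) -> float:
    """Sum over all papers of 1 / (number of authors)."""
    return sum(1 / n for n in author_counts)


# Hypothetical result set: one sole-authored paper, two papers with
# two authors each, and one paper with four authors.
print(papers_per_author([1, 2, 2, 4]))  # 1 + 0.5 + 0.5 + 0.25 = 2.25
```

Four papers thus count as 2.25 single-authored equivalents in this example.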

Average number of authors per paper

The average number of authors per paper is calculated by adding up the total number of authors involved in the result set for the author or journal in question and dividing this by the number of papers. It gives an indication of the extent to which an author or journal publishes sole-authored or co-authored articles. However, as single publications with a large number of authors can increase this metric substantially, it is not as good a reflection of an author's individual productivity as the average number of papers per author.

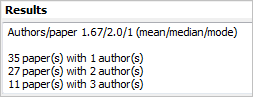

At the bottom of the unnamed upper field of the results panel (use the scroll bar to make this part visible), you will also find the median and mode for the number of authors per paper (see screenshot). Although the median does not usually provide much additional information, especially for authors/journals with a relatively low number of co-authors, the mode provides a good assessment of the author's tendency for co-authorship.

Finally, an analysis is shown of the number of papers with a certain number of authors. The screenshot above, which shows my own publication statistics, indicates clearly that I most frequently tend to publish alone or with one co-author.
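The authorship statistics discussed in this subsection can be reproduced with Python's standard library. The sketch below uses invented author counts (not the data from the screenshot) and shows how a single paper with many authors shifts the mean while leaving the median and mode unchanged. The function name `author_stats` is hypothetical.

```python
from collections import Counter
from statistics import median, mode


def author_stats(author_counts):
    """Mean, median and mode of authors per paper, plus the number of
    papers for each author count."""
    return {
        "mean": sum(author_counts) / len(author_counts),
        "median": median(author_counts),
        "mode": mode(author_counts),
        "distribution": dict(sorted(Counter(author_counts).items())),
    }


# Hypothetical result set: number of authors for each paper.
typical = [1, 1, 1, 2, 2, 3, 4]
with_outlier = typical + [30]  # one extra paper with 30 authors

print(author_stats(typical))
print(author_stats(with_outlier))
```

With these numbers the mean rises from 2.0 to 5.5 when the 30-author paper is added, whereas the median stays at 2 and the mode at 1.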

Worked example: What can one conclude from simple metrics?

Although most bibliometric analyses tend to focus on complex metrics such as the h-index and its variations, there is a lot one can learn from comparing relatively simple metrics.

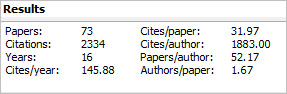

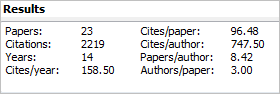

The screenshot above compares my own publication record (left) with that of a colleague (right), chosen because her total number of citations and time since first publication are very similar to mine. As a result, our number of citations per year is fairly similar.

However, it is clear that we have followed different publication strategies. I have published almost three times as many papers as my colleague. An important reason is that she has focused mainly on publications in top US journals, whilst I have published in a wider range of journals. I have also published books and book chapters as well as white papers that attract citations. As a result, the number of citations per paper is much higher for my former colleague than for me. This could be seen as evidence of publishing higher-quality papers.

However, she has also published with a larger number of co-authors. As a result, my number of “single-authored” citations (cites/author) is 2.5 times as high as hers. The difference in the number of single-authored equivalent papers is even larger, with my record showing 6 times as many single-authored equivalents. This is also reflected in the average number of authors per paper. For my colleague the mean, median and mode are all 3.00.

Neither of these strategies is inherently better than the other. They just reflect different approaches to publishing, which might be shaped by factors such as type and country of doctoral training, country of employment and personal temperaments.

The variety of metrics provided by Publish or Perish allows one to select the metrics most appropriate to one's purpose. However, there are metrics that are designed to combine both productivity (number of papers) and impact (number of citations). The h-index, which will be discussed below, is the most important of these metrics.